I’m interested in technology, but I’m not a technical person. I’m bad at maths and it’s hard for me to understand technical concepts. Sometimes I’m not sure if my brain is wired in some way that is not up to the task or if technical stuff bores me and I space out. Maybe the reason is not so important. For people like me, it’s easier to understand things when they are inside a story. This is a story about algorithmic bias. A story inspired by a real case, but from a fictional TV show: The Good Fight.

The Good Fight is a great show that has a lot to say about current events. It’s fiction, but many of its plots are based on stories we can recognise from the news. The show is from the USA and it deals mainly with that country’s political, social, and economic issues, but most of them are common to other Western/capitalist countries.

I love the show, but I’m especially amused by the episodes that deal with topics related to the Internet, algorithms, etc. At one point, an episode about algorithmic bias aired while I was reading Weapons of Math Destruction by Cathy O'Neil, and both talked about the same lawsuit.

In case you are not familiar with the term “algorithmic bias”, it’s when a computer system generates an unfair result. For example, giving priority to a man over a woman or a white person over a racialized person.

To understand it easily, I'm going to explain what happens in episode 4 of season 2 of The Good Fight. Liz Reddick, a lawyer, is taking her son to school and finds out that Mr Coulson, the boy's favourite teacher, is being kicked out. She’s surprised because he’s a great teacher, so she talks to the school principal to find out what happened. The school principal doesn’t give her any clear explanation, but Liz learns that the teacher is going to sue the school and, as she’s a lawyer, she decides to help him.

At first, Liz thinks that someone in the school is racist or homophobic because Mr Coulson is black and gay. However, in the arbitration, the school principal explains that teachers are only dismissed on the basis of their performance. And how is their performance calculated?

With an algorithm. The school’s argument is that algorithms are logical and mathematical, and that's what schools need in order to be unbiased. The problem is that it’s not true that algorithms are unbiased. To begin with, for an algorithm to be fair it has to be based on correct and unbiased data.

As I mentioned before, this story was inspired by a real case that happened in the USA and is explained in O'Neil’s book. In 2007, the city of Washington, D.C. was searching for a way to improve the quality of its state schools. The people in charge decided that if children were not learning, it was the teachers’ fault, so they had to find a way to measure teachers’ performance.

With this goal in mind, they created a tool to evaluate teachers. The tool assigned each teacher a score after assessing a series of data. If a teacher’s score was too low, they lost their job. The idea was not so bad: a lot of people are fired for the wrong reasons, such as nepotism, so eliminating the human factor could avoid favouritism. But things are not that simple...

Among all the teachers who were fired, there was a highly valued teacher named Sarah who had never had any problems in her job and wanted to know why she was being sacked. After doing some research, she managed to find out that the reason was an evaluation system developed by a private consultancy firm.

Sarah's complaint was not an isolated case: many teachers complained for years. They found out that the cause of their dismissal was an algorithm, but most of them didn’t sue because they were intimidated by the mathematical complexity of the subject.

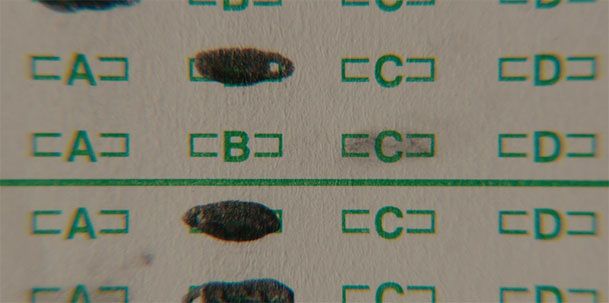

In The Good Fight, they explain that one of the main problems is that algorithms are based on data, and data is subjective and open to interpretation or even fraud. In the show, when the lawyers review the evidence, they notice that many of the answer sheets from the class exams are full of erased answers.

Kids erase and rewrite a lot in exams, so that’s not strange in itself, but the lawyers suspect that there’s something fishy going on because students get better marks with teachers they don't like.

Sarah, the real teacher who suffered this algorithmic bias, noticed something related, but not exactly the same thing. She found it strange that kids who came from other schools were unable to read or write simple sentences when their grades in those other schools had been really good. The story is a bit different, but in both cases, we have bad teachers who are lying to save their asses.

Those bad teachers were correcting the kids’ answers to get them higher marks so that the algorithm would think that their performance as teachers was better. In other words, the algorithm was dismissing those who were good and honest and giving favourable treatment to those who lied.
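For readers who like to see the mechanics, here is a minimal sketch in Python of how this can happen. It is only an illustration under stated assumptions: the real evaluation model is not described in this text, and the `gain_score` function and all the marks below are made up. It assumes a teacher's score is simply the change in the class's average mark from one year to the next.

```python
from statistics import mean


def gain_score(marks_before, marks_after):
    """Hypothetical teacher score: change in the class's average mark."""
    return mean(marks_after) - mean(marks_before)


# Invented marks for the same three students at three points in time.
marks_on_arrival = [40, 45, 50]        # honest marks before year 1

# Year 1: the teacher alters the answer sheets, so the recorded marks
# are much higher than what the students can really do.
true_marks_year_1 = [42, 47, 52]       # what the students actually learned
recorded_marks_year_1 = [80, 85, 90]   # what the algorithm sees

# Year 2: an honest teacher helps the students improve and reports real marks.
recorded_marks_year_2 = [60, 65, 70]

# The algorithm only sees recorded marks.
cheating_teacher_score = gain_score(marks_on_arrival, recorded_marks_year_1)
honest_teacher_score = gain_score(recorded_marks_year_1, recorded_marks_year_2)

# What the honest teacher really achieved, measured against true marks.
honest_teacher_true_gain = gain_score(true_marks_year_1, recorded_marks_year_2)

print("Cheating teacher's score:", cheating_teacher_score)
print("Honest teacher's score:", honest_teacher_score)
print("Honest teacher's real gain:", honest_teacher_true_gain)
```

With these invented numbers, the teacher who altered the answers gets a score of 40, while the honest teacher gets -20 even though the students really improved by 18 points. The formula is not doing anything wrong mathematically; it is simply trusting data that someone had a reason to falsify.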

In both fiction and real life, there’s a plot twist at the end. Mr Coulson gets his job back, but he’s offered a better-paid job at a private school. That's also what happened with Sarah. She was offered a better-paid job at a private school and left the public education system.

O'Neil's conclusion in her book is that all this algorithm nonsense matters not only to the teachers who suffered it, but also to all of us because:

“…thanks to a highly questionable model, a poor school lost a good teacher, and a rich school, which didn’t fire people on the basis of their students’ scores, gained one.”

Weapons of Math Destruction, Cathy O'Neil.

This is just one of the many problems caused by the algorithms, artificial intelligence, and other computing methods that already rule our lives, even if we are unaware of them. I’m not a Luddite and I’m not against AI or any other form of technology, but we have to remember that technology is never neutral or objective.

Algorithms can make many tasks easier, but they can also cause us to be denied a job or a loan. In some countries, algorithms are already being used to decide whether or not to grant parole and other serious matters. We need to be aware of how things work. Don’t be naive: no matter how complex a system is, there are always human beings behind it making it work, for better or worse.

By the way, The Good Fight is now in its final season, so maybe it’s a good time to watch it if you haven’t seen it. It’s not one of those eye-catching TV shows with expensive visual effects and jaw-dropping plot twists, but one that is really well-written, funny, and critical of the times we are currently living in.

A couple of books about biases

My favourite book about bias is Invisible Women: Data Bias in a World Designed for Men by Caroline Criado Perez. Criado Perez explores a lot of different areas, from urban environments and medicine to public restrooms. I identified with a lot of the things that she explains. Some can be funny, but most of them are worrisome or downright scary. This one has been translated into many languages.

If you are worried about introducing your biases into your work (I am), this is a good place to start: Design for Cognitive Bias by David Dylan Thomas. Even if you’re not a designer, it’s a short book that explains the bias issue in simple words, and what Thomas writes can be applied to other disciplines.

Biases are not only about big issues, such as sexism or racism. A lot of biases are much more subtle and it’s impossible to be aware of all of them. Cognitive biases are mainly about how we process and interpret information, and even if a lot of them are not as problematic as a racist algorithm, they can end up causing that kind of problem, so it’s important to also be aware of the smaller biases of everyday life.

I’m sure that there are other good books. If you have any suggestions, please write a comment.

How can a biased society code an unbiased machine?

The answer to that question is that a biased society can’t code an unbiased machine. Moreover, all societies, present or future, are and always will be biased. We can improve, but we’ll always have unconscious biases. That’s why it’s so important not to believe everything we are told and to be critical of our own preconceptions.

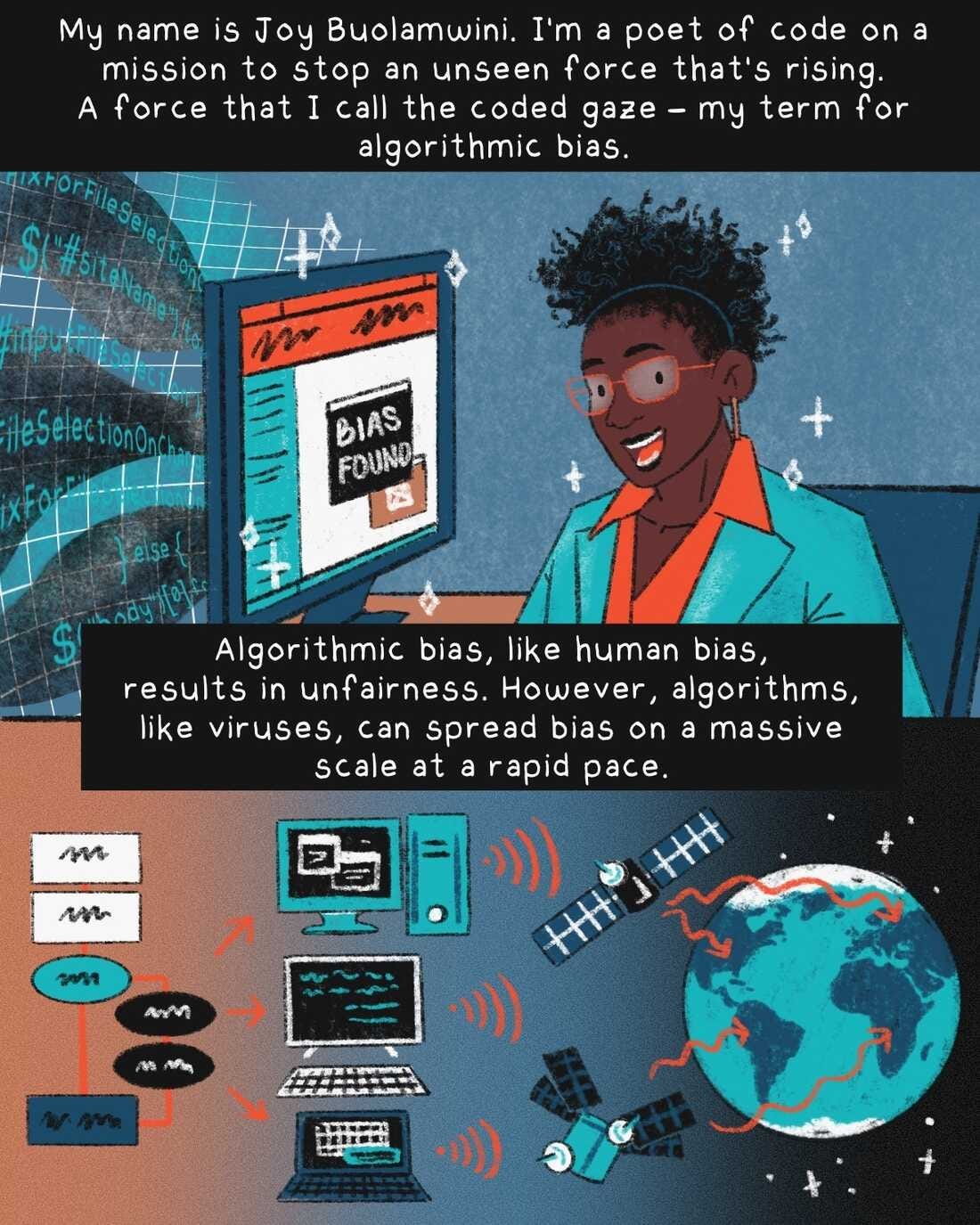

How a computer scientist fights bias in algorithms

This short comic, which you can read on NPR, explains the whole problem quite well. It’s easy to understand, even for anyone who is not into technology. It tells the story of Joy Buolamwini, a computer scientist who founded the Algorithmic Justice League, “an organization that combines art and research to illuminate the social implications and harms of artificial intelligence.”

This week I haven’t had much time to write because it’s autumn again and we’re back to the standard full-time hours at my day job. I’m writing this on Friday afternoon. It’s 7:56pm and now I’m going to watch this week’s episode of The Good Fight.

Remember that this newsletter is open and free for anyone interested, so it would be great if you shared it and helped me reach more people.

If you want to sponsor this and my art with something more mundane, but necessary, you can support me on ko-fi or buy some of my films, albums, etc. at my shop.

Take care of yourselves and be excellent to each other :)